Beyond the Lakehouse: Engineering Data Architectures for the Age of Agentic AI

This article discusses the evolution of data architectures from traditional models to Lakehouse and LakeDB, focusing on their role in supporting agentic AI. It highlights the importance of governance, security, and scalability in enabling autonomous systems.

In 2025, the conversation around artificial intelligence has entered a new phase. Agentic AI, meaning autonomous systems capable of acting on data without constant human intervention, is moving from experimental research into enterprise pilots. The promise is immense, but so is the challenge. While most organizations are eager to adopt AI, few have data architectures robust enough to sustain it. Traditional data lakes are too loose, and conventional warehouses are too rigid. The Lakehouse model offered a middle ground, and now discussions around LakeDB point to the next architectural frontier: systems designed not just to store and serve data, but to empower autonomy.


At the center of these shifts are data leaders who have spent years translating architectural complexity into enterprise-scale solutions. Saradha Nagarajan, Senior IEEE Member and seasoned data engineer with more than 15 years of experience, is recognized for her expertise in building scalable, future-ready systems that balance innovation with reliability.

“Models get all the attention, but architecture decides whether AI survives in the enterprise,” Saradha reflects.

Why the Lakehouse Became the Standard

The industry’s move to the Lakehouse model was born out of necessity. Data lakes offered scalability, but without governance or reliability. Data warehouses provided structure, but at high cost and with limited flexibility. The Lakehouse combined the best of both: low-cost storage, support for both structured and unstructured data, and warehouse-grade features such as ACID transactions, schema enforcement, and fine-grained security.

The State of the Data Lakehouse 2025 report confirms that the Lakehouse has become the de facto enterprise standard, powering AI initiatives by allowing raw and curated data to coexist under a single, governed architecture. Yet as enterprises begin testing agentic AI, even Lakehouses show their limits. These new autonomous systems require architectures that not only deliver clean data but also enforce governance and security, and respond, in real time.

Enter LakeDB: A Blueprint for Agentic AI

The next wave in enterprise data infrastructure is already being discussed: LakeDB. Unlike Lakehouses, which primarily manage how data is stored and accessed, LakeDB introduces a more autonomous, database-like model. It envisions systems capable of self-managing pipelines, enforcing governance automatically, and enabling AI agents to interact responsibly with enterprise data.

Key features such as transaction consistency at scale, real-time cataloging, and policy-driven governance make LakeDB an essential evolution for agentic AI. Although LakeDB is still an emerging concept, early adopters are treating it as a blueprint for future readiness.
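To make "policy-driven governance" concrete, here is a minimal sketch of how a data platform might decide whether an AI agent may touch a dataset. It is an illustration only: the `Policy` and `AgentRequest` classes, the role names, and the dataset names are all hypothetical and do not come from any LakeDB specification or product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical rule: which roles may perform which actions on a dataset."""
    dataset: str
    allowed_roles: frozenset
    allowed_actions: frozenset


@dataclass(frozen=True)
class AgentRequest:
    """Hypothetical request issued by an autonomous agent."""
    agent_id: str
    role: str
    dataset: str
    action: str


def is_allowed(request, policies):
    """Deny by default; allow only if some policy matches dataset, role, and action."""
    for policy in policies:
        if (
            policy.dataset == request.dataset
            and request.role in policy.allowed_roles
            and request.action in policy.allowed_actions
        ):
            return True
    return False


# Illustrative policies (assumed names, not taken from the article).
POLICIES = [
    Policy("warranty_claims", frozenset({"analyst_agent"}), frozenset({"read"})),
    Policy("sales_orders", frozenset({"analyst_agent", "ops_agent"}), frozenset({"read", "write"})),
]

if __name__ == "__main__":
    read_req = AgentRequest("agent-1", "analyst_agent", "warranty_claims", "read")
    write_req = AgentRequest("agent-1", "analyst_agent", "warranty_claims", "write")
    print(is_allowed(read_req, POLICIES))
    print(is_allowed(write_req, POLICIES))
```

The deny-by-default choice matters here: an agent acting on a dataset nobody wrote a policy for is refused rather than silently permitted.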

“The next leap is not just serving data faster,” Saradha notes, “it is building systems that can act on their own, securely, transparently, and at scale.”

Scalable Data Lake & Lakehouse Architecture for AI Enablement

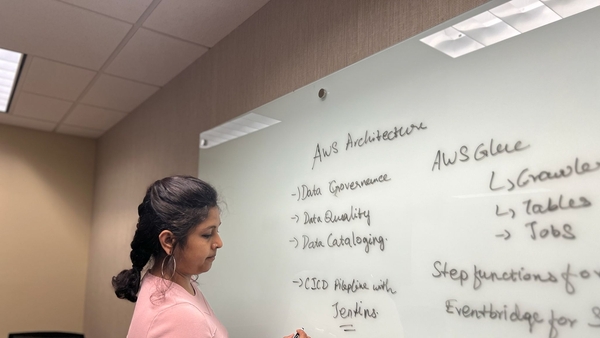

Saradha’s work illustrates how these ideas are taking root in practice. In early 2024, she led the design of a scalable AWS-based data lake, which has since evolved into a Snowflake Lakehouse built on Apache Iceberg tables and a Medallion architecture.

This initiative migrated 13 enterprise datasets from SAP HANA into AWS, built modular pipelines using Glue, Athena, Lambda, and Step Functions, and introduced role-based security with curated data catalogs. The architecture now supports business self-service analytics through caching and writeback capabilities, all while ensuring strict governance.
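For readers unfamiliar with the Medallion pattern, the sketch below shows its general idea in plain Python: raw "bronze" records are cleaned into a "silver" layer and then aggregated into a business-ready "gold" layer. This is not the pipeline described in the article. The field names, sample rows, and functions are assumptions chosen for illustration, and a production version would run on services such as those named above rather than on in-memory lists.

```python
def to_silver(bronze_rows):
    """Clean raw records: skip rows without an id, drop duplicate ids, normalize fields."""
    seen_ids = set()
    silver = []
    for row in bronze_rows:
        record_id = row.get("id")
        if record_id is None or record_id in seen_ids:
            continue
        seen_ids.add(record_id)
        silver.append(
            {
                "id": record_id,
                "region": str(row.get("region", "unknown")).strip().lower(),
                "amount": float(row.get("amount", 0)),
            }
        )
    return silver


def to_gold(silver_rows):
    """Aggregate cleaned records into a per-region total for analytics."""
    totals = {}
    for row in silver_rows:
        totals[row["region"]] = totals.get(row["region"], 0.0) + row["amount"]
    return totals


# Hypothetical raw (bronze) records with typical quality problems.
BRONZE = [
    {"id": 1, "region": " EMEA ", "amount": "120.5"},
    {"id": 1, "region": "EMEA", "amount": "120.5"},   # duplicate id
    {"id": 2, "region": "apac", "amount": 80},
    {"id": None, "region": "emea", "amount": 10},      # missing id
]

if __name__ == "__main__":
    silver = to_silver(BRONZE)
    print(to_gold(silver))
```

Keeping each layer as a separate step is what lets governance rules attach to a specific level of data quality, for example exposing only the gold layer to self-service users.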

The impact has been transformative. Pipeline scalability improved by 40%, with reduced latency. Machine learning-driven decision-making is now enabled across warranty conversion, contract renewal, and sales workflows, and business teams have secure, curated access to data. The work has also laid the foundation for agentic AI systems and analytics automation.

The project stands as a first-of-its-kind architecture within the company, positioned not only as a backbone for analytics but as a critical enabler for AI-driven revenue streams.

“We designed with a simple premise: if tomorrow’s AI agents needed to act on this data, the architecture should already support it,” Saradha explains.

Governance, Security, and Standards in AI-Ready Architecture

If agentic AI represents autonomy, governance represents accountability. Enterprises adopting autonomous systems must ensure that every action is traceable, explainable, and secure. Saradha has worked at this intersection as a Cybersecurity Awards judge, evaluating emerging solutions not just for innovation but for resilience against misuse.
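One common way to make actions traceable is a tamper-evident audit log, in which each entry stores a hash of the previous one so that later edits can be detected. The sketch below illustrates that general technique using only Python's standard library. It is an assumption about how traceability could be implemented, not a description of any system mentioned in this article, and the function and field names are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """Hash an entry body deterministically (keys sorted before serializing)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, agent_id, action, target):
    """Append an agent action, chained to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {
        "agent_id": agent_id,
        "action": action,
        "target": target,
        "prev_hash": prev_hash,
    }
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry


def verify(log):
    """Return True only if every entry is unmodified and correctly chained."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    audit_log = []
    append_entry(audit_log, "agent-7", "read", "warranty_claims")
    append_entry(audit_log, "agent-7", "write", "renewal_offers")
    print(verify(audit_log))
    audit_log[0]["action"] = "delete"  # simulate tampering with history
    print(verify(audit_log))
```

A chain like this shows that a record was altered, but not who altered it; in practice it would sit alongside access controls and external log storage.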

Industry analysts emphasize that governance and security are now inseparable from data architecture. Frameworks such as the EU AI Act and the U.S. Blueprint for an AI Bill of Rights are pushing enterprises to demonstrate accountability in how AI systems consume and act on data. This shift means that technology leadership is no longer just about engineering performance, but about embedding trust and compliance as core design principles.

“Autonomy without accountability is chaos,” she emphasizes. “The architectures we build for agentic AI must prove not only that they work, but that they can be trusted.”

Looking Ahead: The Architecture of Autonomy

As enterprises continue their migration journeys, the question is no longer whether AI will play a role; it is whether the data backbone is strong enough to support autonomy. Early Lakehouse adoption laid a foundation, but LakeDB-style architectures represent the next logical step, providing enterprises with the agility, auditability, and intelligence to support autonomous systems responsibly.

Saradha believes the role of data engineers is shifting from pipeline builders to architects of autonomy. They will not only be tasked with building scalable systems, but also with embedding accountability, explainability, and resilience into every layer of architecture.

Agentic AI demands a new kind of data backbone, one that is scalable, governed, and capable of collaborating with autonomous systems. The conversation around LakeDB signals this next frontier. For enterprises, the challenge will not be adopting AI models, but ensuring their architectures are ready for autonomy. For leaders like Saradha Nagarajan, the path forward is already clear: design not just for today’s analytics, but for tomorrow’s intelligence.

“The best data platforms will not just support AI,” she says. “They will collaborate with it, responsibly, transparently, and at scale.”
